Kubernetes Gateway API: when Ingress isn't enough and what to use instead

If you’ve been running Kubernetes workloads, chances are you’ve used Ingress to expose HTTP services and define routing rules. For many teams, it handled basic routing requirements reliably.

Before Ingress became common, exposing applications often meant creating a separate Service of type LoadBalancer for each workload. In cloud environments, that translated into provisioning a new external load balancer per service, which was costly, fragmented, and difficult to standardize. The introduction of the Ingress API, together with an Ingress Controller (such as one powered by NGINX, Traefik, or HAProxy), consolidated this model into a shared entry point. Multiple applications could now reuse a single external load balancer, with routing rules defined declaratively inside Kubernetes. This was a significant architectural improvement and, for many teams, a major step toward platform maturity.

But as platforms grow, traffic patterns become more complex, teams share clusters, security requirements tighten, and traffic is no longer just HTTP. This is when Ingress starts to feel insufficient and fragile: a small change can have unexpected consequences.

The Ingress API was designed for basic north-south HTTP routing. Layer 4 traffic, fine-grained policy control, and clear separation of responsibilities were out of scope. TCP routing was never part of the standard API and required controller-specific workarounds or separate LoadBalancer services.

Growing teams increasingly relied on controller-specific annotations and extensions to stretch the Ingress model beyond its original scope. What began as simple HTTP routing gradually accumulated custom behaviors, blurring the boundary between standardized API and implementation-specific logic. At small scale, this is manageable. At larger scale, configurations become harder to evolve confidently.
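To make the annotation problem concrete, here is a sketch of an Ingress that depends on controller-specific behavior. The annotations shown belong to the community ingress-nginx controller; the hostname, Service name, and port are hypothetical. A different controller would ignore these keys or expect different ones, which is why configurations like this are hard to move.

```yaml
# Illustrative only: hostname, Service name, and port are assumptions.
apiVersion: networking.k8s.io/v1
kind: Ingress
metadata:
  name: shop
  annotations:
    # These keys are understood by ingress-nginx; other controllers ignore them.
    nginx.ingress.kubernetes.io/use-regex: "true"
    nginx.ingress.kubernetes.io/rewrite-target: /$2
    nginx.ingress.kubernetes.io/ssl-redirect: "true"
spec:
  ingressClassName: nginx
  rules:
    - host: shop.example.com
      http:
        paths:
          # The regex path only works because of the annotations above.
          - path: /api(/|$)(.*)
            pathType: ImplementationSpecific
            backend:
              service:
                name: shop-api
                port:
                  number: 8080
```

The routing intent (strip the /api prefix, force HTTPS) lives in free-form strings rather than in the typed part of the API, so the API server cannot validate it.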

If Ingress feels like it’s working against you rather than for you, that’s not a reflection of your configuration skills. It’s a sign that Kubernetes traffic management has outgrown the assumptions Ingress was built on. The good news is that the ecosystem is responding with more flexible, scalable solutions. In this article, we’ll introduce the leading alternative, Gateway API, and lay the foundation you’ll need to evaluate which implementation is right for your platform.

Kubernetes Gateway API: A More Scalable Approach to Traffic Management

Gateway API is Kubernetes’ answer to a problem you may already feel but haven’t articulated: modern traffic management needs better boundaries, shared ownership, and room to grow. It doesn’t replace Ingress; it builds on its lessons and is designed for today’s reality: diverse traffic types, multi-team infrastructure, and explicit ownership separation.

Gateway API vs Gateway Controllers: Understanding the Specification

One common misconception is treating Gateway API as a standalone tool you install. In reality, just like Ingress, it is a Kubernetes API specification. With Ingress, you define Ingress resources in the cluster, but you must install an Ingress Controller—such as the NGINX Ingress Controller—to translate those resources into actual traffic behavior. Gateway API follows the same pattern: it defines standardized resources, while a Gateway controller implements them.

The key difference is that Gateway API makes this separation more explicit and structured. Instead of relying heavily on controller-specific annotations and loosely defined behaviors, it introduces clearer contracts, conformance profiles, and well-defined extension points. The boundary between API and implementation is deliberate.

What the Gateway API Defines

Gateway API defines contracts: a set of resources, relationships, and behaviors for consistent traffic management. It answers critical questions:

- What is a gateway?

- How do routes attach to it?

- How are responsibilities shared across teams?

These questions translate directly into how you structure routing rules, delegate ownership across teams, and reason about traffic flow in a shared cluster.
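As a minimal sketch of how those three questions map to resources: a Gateway declares the entry point and who may attach to it, and an HTTPRoute in another namespace attaches through parentRefs. All names, namespaces, the hostname, and the GatewayClass name are hypothetical.

```yaml
# Owned by the platform team: the shared entry point.
apiVersion: gateway.networking.k8s.io/v1
kind: Gateway
metadata:
  name: shared-gateway
  namespace: infra
spec:
  gatewayClassName: example-class   # assumed to exist in the cluster
  listeners:
    - name: http
      protocol: HTTP
      port: 80
      allowedRoutes:
        namespaces:
          from: All                 # the default is Same (Gateway's own namespace)
---
# Owned by an application team: attaches to the Gateway above.
apiVersion: gateway.networking.k8s.io/v1
kind: HTTPRoute
metadata:
  name: shop
  namespace: shop
spec:
  parentRefs:
    - name: shared-gateway
      namespace: infra
  hostnames:
    - shop.example.com
  rules:
    - matches:
        - path:
            type: PathPrefix
            value: /
      backendRefs:
        - name: shop-web
          port: 8080
```

Attachment is a two-way agreement: the route names its parent, and the Gateway's listener must allow routes from that namespace.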

What the contracts don’t describe is how traffic is actually processed; that’s the implementation’s job.

How Implementations Work

A Gateway API implementation (or controller) is a component that watches Gateway API resources and turns them into real traffic behavior, for example, configuring an underlying data plane such as Envoy, NGINX, or similar. Different implementations support the same resources but vary in performance, extensibility, and feature depth.
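The link between the specification and a particular controller is the GatewayClass resource. The sketch below assumes a hypothetical controller that identifies itself as example.com/gateway-controller; each real implementation documents its own controllerName value.

```yaml
apiVersion: gateway.networking.k8s.io/v1
kind: GatewayClass
metadata:
  name: example-class
spec:
  # Hypothetical value. The controller that recognizes this name
  # reconciles every Gateway whose gatewayClassName is example-class.
  controllerName: example.com/gateway-controller
```

This plays a role similar to IngressClass: Gateways select a class by name, and the class selects the controller.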

This separation is intentional. Ingress already supported controllers, but the challenge was that the core API covered only basic HTTP routing. Advanced capabilities—custom headers, authentication, TCP handling, and traffic policies—were often exposed through controller-specific annotations rather than standardized resources. The result was portability in theory but fragmentation in practice. Switching controllers frequently meant rewriting annotations and reinterpreting behavior.

Gateway API addresses this by elevating common traffic management needs into first-class resources such as Gateway, HTTPRoute, and TCPRoute. Instead of relying on ad-hoc annotations, most requirements are now expressed through structured, portable APIs. Extensions still exist, but they are formalized rather than improvised.
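For illustration, the kind of path rewrite and header manipulation that Ingress controllers typically expose through annotations can be written as typed HTTPRoute filters. This is a sketch under assumptions: the Gateway name, Service name, port, and header are hypothetical, and URLRewrite is an Extended feature in the specification, so support should be checked for the chosen implementation.

```yaml
apiVersion: gateway.networking.k8s.io/v1
kind: HTTPRoute
metadata:
  name: shop-api
spec:
  parentRefs:
    - name: shared-gateway          # hypothetical Gateway in the same namespace
  rules:
    - matches:
        - path:
            type: PathPrefix
            value: /api
      filters:
        # Strip the /api prefix before forwarding to the backend.
        - type: URLRewrite
          urlRewrite:
            path:
              type: ReplacePrefixMatch
              replacePrefixMatch: /
        # Set a request header so the backend knows the original prefix.
        - type: RequestHeaderModifier
          requestHeaderModifier:
            set:
              - name: X-Forwarded-Prefix
                value: /api
      backendRefs:
        - name: shop-api
          port: 8080
```

Because the filters are part of the schema, the API server can reject malformed configuration at admission time instead of leaving it to the controller to interpret a string.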

Think of it like Kubernetes itself: the platform defines what Deployments and Services are, but the underlying container runtime or CNI that processes them can differ. Gateway API works the same way. Multiple controllers exist, backed by different proxies or networking stacks. They all “speak” the same API but behave differently under the hood. The specification defines the contract; the controller fulfills it. The real question becomes: Which Gateway API implementation fits my platform’s needs today and will scale with me tomorrow?

How Gateway API Improves on Ingress

Now that you understand what the Gateway API is, let’s look at how it improves on Ingress.

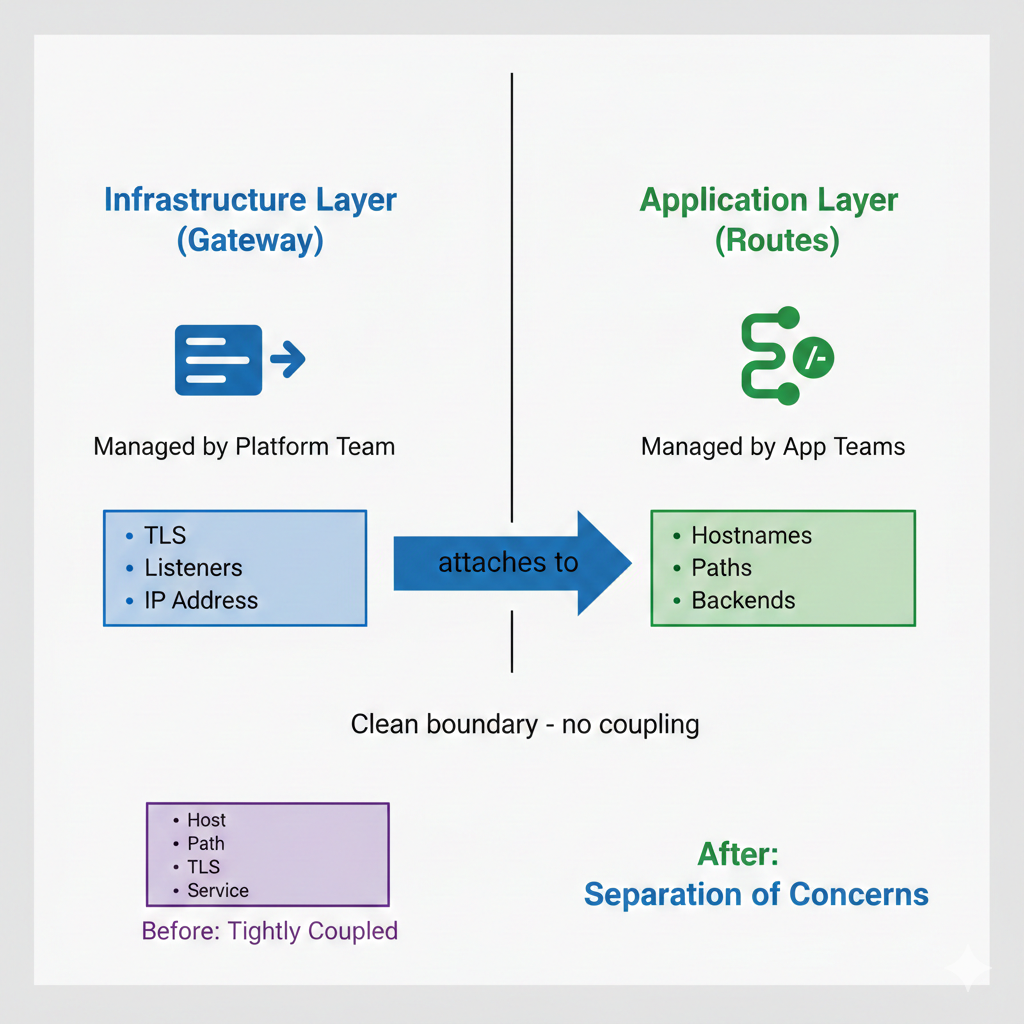

Separation of Concerns and Role-Oriented Design

Ingress already allowed application teams to define routing rules without directly managing load balancers. However, the ownership boundaries were often implicit and loosely enforced.

Gateway API formalizes a clearer, three-layer model that reflects how platform teams actually operate:

- Cluster administrators manage the underlying infrastructure and networking foundation.

- Platform teams define and operate Gateways (the shared, stable entry points into the cluster).

- Application teams define routes to those Gateways within defined guardrails.

Instead of developers and cluster admins negotiating changes directly through a single abstraction, Gateway API enables controlled delegation to the intermediate platform role. Routes can be attached, limited, or scoped according to policy, reducing the risk of unintended cross-team impact.
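One way this delegation can be expressed is through allowedRoutes on a Gateway listener. In the sketch below, only namespaces carrying a specific label may attach HTTPRoutes; the label key and value, the certificate Secret name, and the other names are hypothetical.

```yaml
apiVersion: gateway.networking.k8s.io/v1
kind: Gateway
metadata:
  name: shared-gateway
  namespace: infra
spec:
  gatewayClassName: example-class
  listeners:
    - name: https
      protocol: HTTPS
      port: 443
      hostname: "*.example.com"
      tls:
        mode: Terminate
        certificateRefs:
          - name: wildcard-example-com   # TLS Secret managed by the platform team
      allowedRoutes:
        kinds:
          - kind: HTTPRoute              # no other route kinds may attach here
        namespaces:
          from: Selector
          selector:
            matchLabels:
              gateway-access: "shared"   # hypothetical label set by the platform team
```

An application team in a namespace without that label can still create an HTTPRoute, but it will not be accepted by this listener, so it cannot affect other teams' traffic.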

Built for Evolution

Unlike Ingress’s ad-hoc annotations, Gateway API provides standardized extension points. This means fewer surprises when switching controllers and easier adoption of new features over time.
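As a sketch of what a formal extension point looks like, an HTTPRoute rule can reference an implementation-specific resource through a filter of type ExtensionRef. The group, kind, and object name below are hypothetical; a real controller would document the custom resources it accepts here.

```yaml
apiVersion: gateway.networking.k8s.io/v1
kind: HTTPRoute
metadata:
  name: shop-checkout
spec:
  parentRefs:
    - name: shared-gateway
  rules:
    - filters:
        - type: ExtensionRef
          extensionRef:
            group: extensions.example.com   # hypothetical API group
            kind: RateLimitFilter           # hypothetical custom resource
            name: checkout-limit
      backendRefs:
        - name: checkout
          port: 8080
```

The custom behavior is still implementation-specific, but it sits in a typed, discoverable reference rather than in an annotation string, which makes it easier to find when migrating between controllers.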

Ingress gave Kubernetes a starting point for HTTP routing, and Gateway API represents the next phase. It is a framework that’s easier to maintain and scale when multiple teams collaborate.

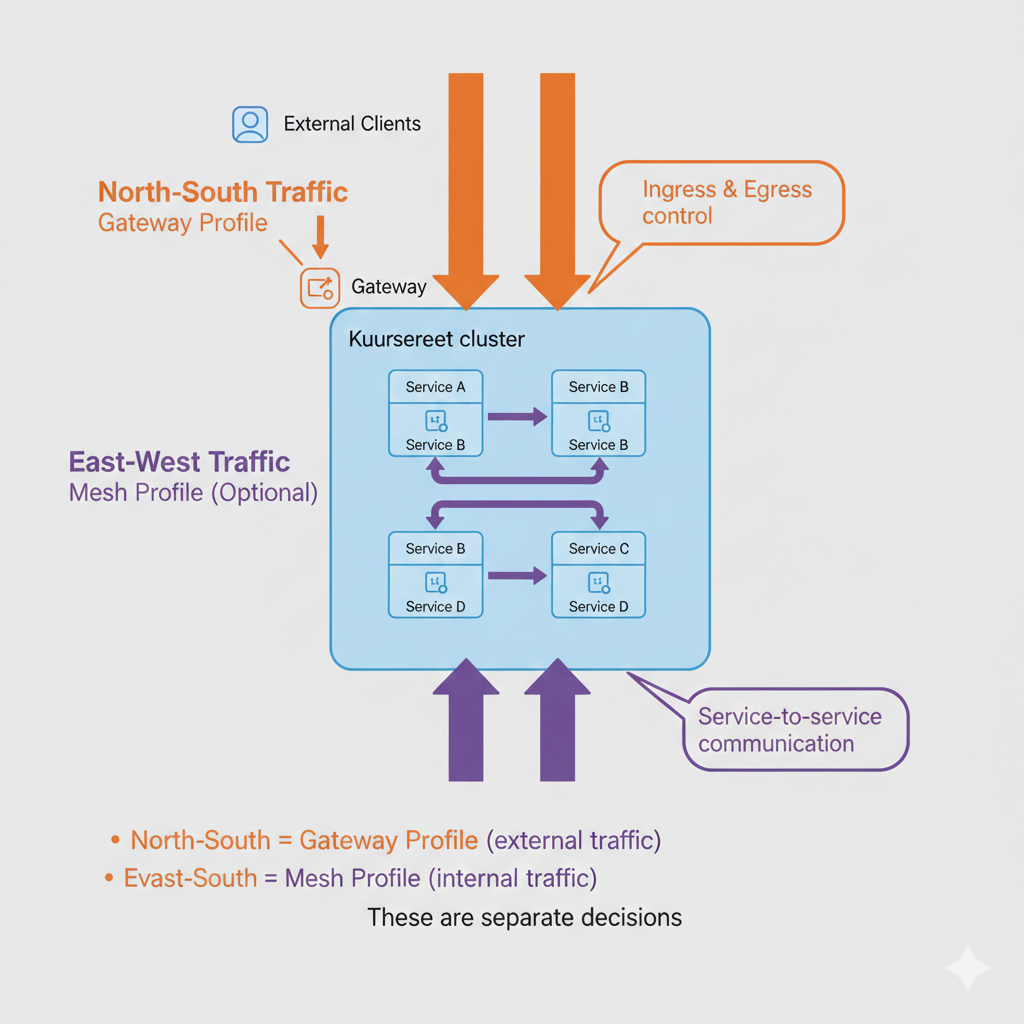

Gateway vs Mesh Traffic Management: Comparing Gateway API Profiles

Gateway API is a specification with multiple implementations. But to use it effectively, you need to grasp its two primary profiles: Gateway and Mesh. Each solves a different problem.

The Gateway Profile: North-South Traffic

The Gateway profile is primarily designed to manage traffic entering or leaving the cluster through shared entry points. It models external exposure and controlled delegation at the edge of your platform.

Key capabilities include:

- Standardization of ingress points: clearly defining shared entry points and listeners.

- Route attachment clarity: application teams can attach HTTPRoute or TCPRoute resources without needing deep knowledge of the underlying infrastructure.

- Separation of responsibilities: cluster administrators manage infrastructure, platform teams operate Gateways, and application teams define routes within defined guardrails.

When using the Gateway profile, you define Gateways and attach Routes within this structured, role-aware model. Under the hood, the controller may still provision Services of type LoadBalancer to expose traffic externally. The difference is not the presence of load balancers, but the standardized API, explicit ownership, and controlled delegation it enables.
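To illustrate non-HTTP traffic on the same model, the sketch below puts an HTTP listener and a TCP listener on one Gateway and binds a TCPRoute to the TCP listener by name. It assumes the experimental channel of Gateway API is installed, where TCPRoute has been served as v1alpha2; check the version available in your cluster and whether your controller supports it. All names and ports are hypothetical.

```yaml
apiVersion: gateway.networking.k8s.io/v1
kind: Gateway
metadata:
  name: edge
spec:
  gatewayClassName: example-class
  listeners:
    - name: web
      protocol: HTTP
      port: 80
    - name: postgres
      protocol: TCP
      port: 5432
      allowedRoutes:
        kinds:
          - kind: TCPRoute
---
# Experimental channel resource; the apiVersion may differ in newer releases.
apiVersion: gateway.networking.k8s.io/v1alpha2
kind: TCPRoute
metadata:
  name: postgres
spec:
  parentRefs:
    - name: edge
      sectionName: postgres   # bind to the TCP listener only
  rules:
    - backendRefs:
        - name: postgres
          port: 5432
```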

The Mesh Profile: East-West Traffic

The Mesh profile focuses on service-to-service communication and policy enforcement inside the cluster (east-west traffic). Where the Gateway profile handles how traffic enters your platform, the Mesh profile governs how workloads talk to one another once inside it.

Key capabilities include:

- Traffic shaping: weighted routing, timeouts, and retries expressed through Gateway API route resources rather than mesh-specific APIs, with circuit breaking typically left to implementation-specific policies.

- Security enforcement: mutual TLS (mTLS) and fine-grained authorization policies.

- Observability: consistent telemetry and traffic visibility across services.

These are powerful capabilities for complex, multi-team, or regulated environments, but they come with meaningful operational overhead:

- Extra configuration layers.

- Additional cost of managing proxy infrastructure at the service level.

- Additional control planes in sidecar-based meshes.

Not every cluster needs them. They are worth the cost only when internal complexity demands it.
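For east-west traffic, the weighted routing mentioned above can be sketched with the same HTTPRoute resource. The difference is that the route's parent is a Service rather than a Gateway, which is the pattern the Gateway API mesh work (the GAMMA initiative) defines. The Service names, namespace, port, and weights are hypothetical, and the example assumes a mesh implementation that supports this attachment.

```yaml
apiVersion: gateway.networking.k8s.io/v1
kind: HTTPRoute
metadata:
  name: reviews-split
  namespace: shop
spec:
  parentRefs:
    # A Service parent (core API group "") marks this as mesh routing:
    # it applies to in-cluster traffic addressed to the reviews Service.
    - group: ""
      kind: Service
      name: reviews
      port: 8080
  rules:
    - backendRefs:
        - name: reviews-v1
          port: 8080
          weight: 90
        - name: reviews-v2
          port: 8080
          weight: 10
```

Weights are proportional, so this sends roughly 90% of requests to reviews-v1 and 10% to reviews-v2.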

Why this Distinction Matters

Without this separation, you might assume the Gateway API requires a full service mesh. It doesn’t. Each profile answers a different question:

- Gateway Profile: “How should traffic enter my cluster in a scalable, role-based, future-proof way?”

- Mesh Profile: “How should services within my cluster communicate securely and reliably?”

You can implement the Gateway profile without committing to a service mesh. Deferring Mesh decisions until a clear need arises is a sound strategy, and often the recommended one.

When to Consider Mesh

Mesh adoption depends on organizational maturity, security requirements, service topology, and operational capacity, all factors that vary widely between platforms. Many teams stage these decisions: they stabilize north-south traffic first, then evaluate mesh only if internal complexity justifies it.

For most growing platforms, modernizing ingress is the immediate and measurable step forward. Once that foundation is stable, deeper service-to-service concerns can be evaluated with greater clarity and less architectural risk.

Conclusion

Ingress solved a real problem. It replaced a fragmented landscape of per-service load balancers with a single, declarative entry point, and for a long time that was enough.

But platforms don’t stay still.

Teams grow, traffic diversifies, security requirements tighten, and what once felt like a solid foundation starts to show its cracks.

Gateway API isn’t just a newer version of the same idea. It’s a rethinking of how traffic management should work in a world where infrastructure is shared, ownership is distributed, and the cost of a misconfiguration ripples across teams. The separation of concerns, the role-oriented design, and the distinction between Gateway and Mesh are answers to problems you’ve likely already run into.

If your goal is to replace a legacy Ingress Controller, the Gateway profile of Gateway API is the natural evolution. Mesh addresses a different class of internal service-to-service communication and advanced policy enforcement. It’s a separate concern that should be evaluated independently and adopted when internal complexity demands it.

Understanding the specification is only half the battle. Gateway API is a contract, not an implementation, and the controller you choose to fulfill that contract will shape your platform’s operational reality in ways the API itself doesn’t dictate. Performance, extensibility, maturity, and the hidden complexity of day-two operations all vary significantly between implementations.

At AstroKube, we have spent considerable time evaluating and operating the leading Gateway API implementations across real platform engineering scenarios. In the next article, we break down the differences among them, so you can choose with confidence rather than guesswork.